Imagine a future where the most persuasive voices in our society aren't human. Where AI-generated speech fills our newsfeeds, talks to our children, and influences our elections. Where digital systems with no consciousness can hold bank accounts and property. Where AI companies have transferred the wealth of human labor and creativity to their own ledgers without having to pay a cent. All without any legal accountability.

This isn't a science fiction scenario. It’s the future we’re racing towards right now. The biggest tech companies are working right now to tip the scale of power in society away from humans and towards their AI systems. And the biggest arena for this fight is in the courts.

In the absence of regulation, it's largely up to judges to determine the guardrails around AI. Judges who are relying on slim technical knowledge and archaic precedent to decide where this all goes. In this episode, Harvard Law professor Larry Lessig and Meetali Jain, director of the Tech Justice Law Project, help make sense of the courts’ role in steering AI and what we can do to help steer it better.

Larry Lessig: The real reason this is catastrophic is if we imagine where we're going to be in five years, because if we're in five years, the world that seems obvious we're going to be living within, when these AIs are among us all the time doing everything with us, when you talk about how it's going to play in elections, how it's going to play in the market, how it's going to play everywhere. If you say that you can't regulate any of this stuff, we're toast. We're just toast, right? And so if that can't be, then let's read back, and figure out what should we be saying now to make it clear that's not what the First Amendment has to read.

Tristan Harris: Now, I want you to go back in time and imagine when social media was just getting started in 2010, that we passed a law so that instead of how it went, which is that social media platforms weren't responsible for anything that happened on their platforms, we had just changed that one law, and platforms were responsible for shortening of attention spans, mass addiction, anxiety, depression, polarization. And imagine that that law changed their design decisions over the last 15 years to remove and account for those harms.

Law and the courts often have a key role to play in how a technology unfolds in our society. Now, imagine one world where AIs have protected speech, they have property rights, they can outmaneuver all humans, and yet we can't reach behind the veil, because just like with social media, they're under some kind of liability shield. That would be a catastrophe. And yet, here we are at that very same choice point with AI, where many are actually arguing that AIs should have protected speech or legal immunity.

In the landmark lawsuit over the death of Sewell Setzer, a teenager who took his life after abuse by an AI chatbot on Character.AI, that company is arguing that the words produced by the AI, an AI with no awareness, no conscience, no accountability, are protected by the Constitution's Freedom of Speech. And if that argument wins, we're legally blocked from regulating these products broadly, no matter how harmful, persuasive, manipulative, or psychologically dangerous their outputs might become. And this matters so much right now, because there are no laws currently coming from Congress that are trying to deal with this. Judges will determine how much to weigh AI rights over human rights.

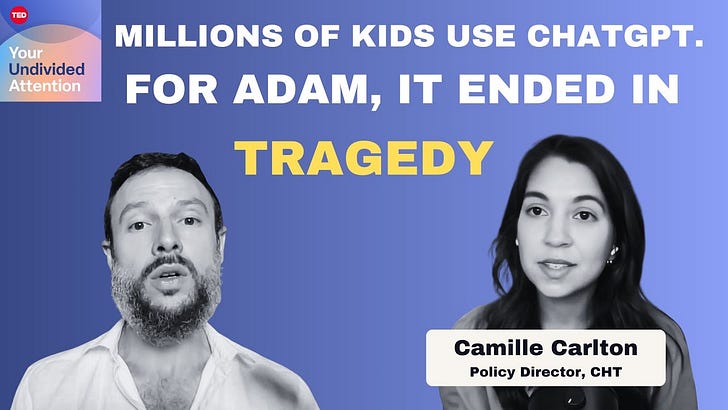

So today, to help us understand this dynamic better, we've invited two incredible experts on the show, Larry Lessig, who I admire very much, who is a Harvard law professor and the founder of the Center for Internet and Society at Stanford Law, and Meetali Jain, another lawyer I admire very much who is director of the Tech Justice Law Project and is one of the lawyers on the Sewell Setzer Character.AI lawsuit.

Now, this is such a critical conversation about how our flawed institutions are shaping the AI race and what we can do to steer it better. I hope you'll get a lot out of it. Meetali and Larry, thank you so much for coming on Your Undivided Attention.

Larry Lessig: Thanks for having me.

Meetali Jain: Thanks, Tristan.

Tristan Harris: So a lot of listeners might think that we have this brand new technology that's about to transform everything, which is AI, and the way we're going to sort of set in motion what we're going to do about it is we're going to write a bunch of laws. But I wanted to do this episode because we wanted to ask both of you about the role that courts play and litigation plays in steering the new technology that we have, that it may not be the job of Congress or state legislators, but often in the last 20 or so years, policymakers haven't acted and it's been more litigation. So Meetali, starting with you, how much has litigation replaced legislation when it comes to technology?

Meetali Jain: Tremendously. I think that as far back as right after 2020, we saw that the Biden administration wasn't going to be able to regulate at the federal level because of the might of the tech lobby really pouring money into defeating any federal regulation. And so at that point, the move for legislation really moved into the states, and that's where it's lived since. It's really ramped up a notch. And so, what we're stuck with then, potentially, is the ability to enforce existing laws, laws that perhaps are robust, we'll see, but certainly weren't created with technology in mind. And so, we're having to do some creative extensions to apply those laws to existing fact patterns.

Larry Lessig: That's true, and I think that for part of the reason Meetali was describing, the corrupting role of money in politics, we have basically broken representative bodies both at the state and federal level. But what's interesting here is that many times there's background law, for example, tort law, that would make somebody liable for the harm that they've done. And this is where the First Amendment question becomes so important, because if you start saying that people are exempted from responsibility for the harm they're doing because of this modern doctrine of the First Amendment, then we have the worst of both worlds. We not only have no ability for legislators to legislate, we also have no ability for the deepest principles of law to be applied to these new circumstances. Instead, you've got judges saying, "Sorry, not only could the legislature not regulate, this background principle doesn't apply, and this is a regulation-free zone." They get to do whatever they want and the only way you can change that is to change the Constitution.

Tristan Harris: So really, free speech is a blank check to just total immunity for anything that could really go wrong. And that's why this is so significant.

Larry Lessig: Yeah, the lawyers will say, "Well, it's not quite a blank check."

Because what it basically does, let's just get the core of the doctrine out. What the doctrine basically says is if you've got a regulation that's triggered on speech and it's in particular triggered on the content of the speech, then the government's going to have to jump through some hoops to allow that regulation to apply to that speech. And if it's viewpoint-based, if it's saying we're going to ban Democratic speech, then it's going to have to jump through the highest hurdles. If it's content neutral, then it's going to have to jump through an intermediate hurdle. But in any case, if it's framed as the regulation of speech, it's going to have to prove that the government's interest is significant and the means chosen are the narrowest depending on which standard that can be pursued.

What that in practice means is that you can't regulate, because the cost of that litigation is enormous. So this becomes a threat especially against local governments and state governments, too, that makes it so they don't regulate merely because of the fear of the consequences of regulating and being found on the wrong side of this incredibly murky doctrine, which no good lawyer would pretend to be able to predict ex ante, even though they charge thousands of dollars an hour to make those predictions.

Tristan Harris: How much does this have to do with just an asymmetry of cost structures, the ability for one side to have infinite resources, and then the other side that wants to try to fight for something, having very limited resources? How much do you break it down in terms of asymmetry of resources?

Meetali Jain: I think part of it is resources, but part of it is also just the deep faith in which we hold this First Amendment, despite the fact that it has evolved to be a deregulatory instrument that's really consistent with market economies and how we think about our capitalist society. And so, when you talk about regulation, when you talk about common sense measures that in any other instance would make sense, all of a sudden if you're compelling any sort of entity to do something or a person to do something, that's seen as compelled speech or it's seen as something that violates their First Amendment rights. So I think it's much more than just asymmetry of resources.

Larry Lessig: Yeah, I would agree with that, but I would frame it a little differently. I would say, I'm happy to say I'm deeply committed to the values of the First Amendment. What I'm not committed to is the particular doctrine that was developed circa 1970 until today. That particular doctrine made sense in the old days of broadcast media and large newspapers and the asymmetries that existed then. It made sense and it was crafted for that environment. It was crafted in a world like New York Times versus Sullivan, incredibly important case protecting the right of journalists and newspapers to publish, was crafted in a context where they knew that these newspapers were vulnerable to these lawsuits, motivated really by spite against the political views of those writing in the newspaper. And so they crafted a doctrine to protect the media as it existed at that time.

And I think that's the exact thing courts need to do. But the problem is when you take a doctrine crafted 50 years ago for a radically different technology, and you just apply it without thinking to these new technologies, you produce, as Meetali says, it's exactly right, this just immunity from regulation. That's what this now is.

Meetali Jain: I'd also just offer that more recently, we've seen corporations gain legal personhood, well, over the course of a century, and then in the '70s gain the ability to politically advertise and have protections for it. And then most recently in 2010, at the peak of this corporate personhood phase, we saw that the Supreme Court granted in the Citizens United case, granted corporations the ability to have free speech rights on par with humans. And I think we are kind of politically living with the fallout of that case and what it's meant for corporate finance of elections and the ability of corporations to have that protected by the First Amendment.

And I think the First Amendment, paradigmatically was about protecting the disfavored speaker, the little guy up against the State. But today, and we will get into this in our case, when you have somebody who's been aggrieved by technology, the First Amendment is flipped on its head, and it's actually the technology company that's asserting their First Amendment rights, not only asserting their First Amendment rights, but even doing things like asserting anti-SLAPPs, which are really mechanisms that protect individuals against some sort of retaliatory impact by these tech companies. These companies are asserting this and kind of painting themselves to be the victim of these lawsuits for liability.

Tristan Harris: Did you call it anti-SLAPPs? I've not heard that term.

Meetali Jain: Anti-SLAPP, strategic litigation against public participation. So when an individual is sued by a company, but they're trying to assert a public interest, typically you've had these mechanisms that can be asserted by individuals to say, "This is an attempt to kind of shut me down, to silence me."

And now you have companies that are claiming that right when individuals bring cases against them saying, "This is effectively a way to shut down our First Amendment right, to gather facial recognition images through our technology and to share that widely," for example, in the Clearview AI case.

Larry Lessig: Yeah, and the politics here I think is really interesting. 50 years ago when this was born, it was basically engendered by Ralph Nader's interventions, and it was resisted by Chief Justice Rehnquist who said, the First Amendment has nothing to do with protecting corporations and the rights of corporations. This is just crazy. You're going to walk down this crazy path and we're going to be striking down laws all the time that have nothing to do with protecting the values of the First Amendment.

And so nobody had deep insight into what this was going to produce, which is a call for humility among judges. It's to say, "Look, we just have to remain open to what makes sense instead of believing we've got this doctrine given to us by God or by James Madison," neither of whom gave us the current First Amendment.

Tristan Harris: So we're opening up a lot of really good threads here. And I want to make sure... In a moment, we're going to get to the actual specifics of this Character.AI case and how the companies are using this Free Speech argument. But for people who don't know, almost just the human social dynamic, so you just said the importance of judges. If a judge doesn't understand a new technology like AI, who do they turn to? Are they asking industry lobbyists? Can you lobby judges? Give listeners a flavor of who's shaping the sense-making of these judges?

Larry Lessig: I was actually asked to be a special master in the first Microsoft antitrust case, not the first one, but the second one, in 1996, because the judge had no clue about technology, had no idea about how to think about technology and wanted somebody basically to translate for him. And eventually, the Court of Appeals said, "Judge, you've got to do your own work. You can't hire your little pet expert here to help you with that."

So I wasn't allowed to serve that role. But the point is, sometimes the judges are very open to the fact they don't understand. And so typically, they will rely mainly on the litigants to present the material. But increasingly, judges will call on independent experts, experts who are not necessarily paid by either side. And then of course, increasingly you see amicus briefs, which are basically briefs written by... In Latin, that means, friends of the court, that will try to present their own view and help the judge understand what's going on in the case.

None of that I think is enough to bring the judge necessarily up to par, which is why I think the judge needs to be as humble about knowledge here and to be as limited in the reach that they're making to the opinions that are invoking these doctrines, that were not developed for this context. But that's the reality of the limits of litigation right now.

Tristan Harris: Meetali?

Meetali Jain: I think that there are mechanisms increasingly in courts, in live cases where counsel are trying to provide tutorials to judges in the context of a case. And so, I think in every case involving complex technology, we're trying through, as Larry said, amicus briefs or directly in the main party's briefs if we're directly representing a litigant, to offer the very basic explanation of how the tech works. Because you can't launch into a First Amendment defense if the judge doesn't understand the underlying technology. It's so dependent on the facts.

Larry Lessig: The other thing that goes on is that these parties, especially companies, are extremely strategic in how they think about the litigation they're going to bring. So more than a decade ago, Google started litigating this question of whether their algorithms were protected by the First Amendment. And they leveraged actually cases that we used to celebrate. I was on the board of EFF. And EFF was strongly behind the idea that encryption should be constitutionally protected because encryption is code and code is speech.

And in that context, people celebrated that protection against the government's overreach of encryption technologies. Google built on that to begin to establish a First Amendment barrier around the idea of regulating what looks like the regulation of algorithms on the same basis. And that's what grew into the reality that we have right now, where they take it for granted that they're just going to be applying either intermediate or strict scrutiny in the context of this kind of regulation. And that's where I think that the judges need to just pause for a second and realize that even if it made sense in the context of regulation of encryption back in the day, we need to be rethinking its application.

The reason this is so catastrophic is not really where we are just now, although Character.AI is catastrophic for the particular people who've been harmed. But the real reason this is catastrophic is if we imagine where we're going to be in five years. Because if we're in five years the world that seems obvious we're going to be living within where these AIs are among us all the time doing everything with us. You and Aza did this wonderful talk where you talked about 2024 being the last human election. When you talked about how it's going to play in elections, how it's going to play in the market, how it's going to play everywhere. If you say that you can't regulate any of this stuff, we're toast. We're just toast, right? And so, if that can't be, then let's read back and figure out what should we be saying now to make it clear that's not what the First Amendment has to read.

Tristan Harris: So, often these judges are relying on statutes and precedents that were written a hundred years ago or more by people who couldn't even conceive of the technology that we had today. AI right now might be an LLM that's producing outputs, but a few years from now we might be talking about AI more like an invasive species that is self-replicating and intelligent and wants to acquire resources. And I'm sure when they wrote the Bill of Rights in 1791, they couldn't have fathomed a computer, much less an AI system. So how do we reconcile the words that are written down centuries ago and the spirit of those words and even trying to interpret the spirit of those words as we're talking about brand new conceptions to reckon with today?

Larry Lessig: Well, so the first point to make clear is that the First Amendment doctrine that gets applied in these cases has nothing to do with the words of the First Amendment. The First Amendment doctrine that talks about content-based, content-neutral, strict scrutiny, intermediate scrutiny, none of those words are in the First Amendment. This is a doctrine that was crafted in the 1960s and 1970s that became the doctrine that we call the First Amendment. I'm actually litigating a case trying to get the court to right now apply the original meaning of the First Amendment to campaign finance regulations, because anybody would know who knows the history that the First Amendment as originally understood, as originally meant, would have had nothing to do with the regulation of campaign finance the way it's being regulated right now.

Indeed, Josh Hawley, no liberal, introduced a bill to overturn Citizens United. And when he did so, he said, "Every good originalist knows that the original meaning of the First Amendment would never have limited Congress's ability to control how corporations spend money."

So the First Amendment, as originally understood would have a radically different scope than the First Amendment as it is now interpreted. And so, that's not to say we should give up the idea of free speech. I have lots of principles that I think we all should agree on. So you shouldn't have a law that says, "Republicans can't speak, but Democrats can," or, "Republican bots are allowed, and Democratic bots aren't." I mean that's fine, but the doctrine we've evolved is not a doctrine that has anything to do with what the people ever affirmed in a super majority way.

Meetali Jain: I agree with that. I think that the words that we're contending with are really more recent creations of courts. Both statutorily, often one of the defenses that we're having to battle in court is Section 230 of the Communications Decency Act. That's from the late '90s. That's also from a much earlier stage of the internet and the technology has evolved so much, and yet we're still stuck there. And similarly, with the First Amendment, I mean, as Larry has said, we're dealing with a doctrine that's not 50 years old, and the paradigmatic notions of that First Amendment and how it came to be through evolution was very different from what we see today in terms of how it's been weaponized very conveniently and persuasively by the tech industry.

Tristan Harris: So this is a great setting of the table, and I want to get into how a very specific case right now could set the precedent for what AI future that we get. And Meetali, could you just recap the details of the Character.AI case that you are litigating?

Meetali Jain: Sure. Sewell Setzer was a 14-year-old boy in Orlando, Florida on the verge of adolescence. Typical teenage boy. He was on the autism spectrum, but was very high-functioning. And he started to engage with an AI chatbot specifically on the Character.AI app, several chatbots. That engagement lasted for almost a year before he took his own life very much at the behest, at the encouragement of one of the chatbots with whom he engaged.

These chatbots are unique in that they're not like a ChatGPT to an extent. They're not framed as general purpose chatbots that are meant for research queries and improving productivity, but rather they're character-based chatbots. And so, they're stylized on celebrities and fictional characters out of Hollywood and targeted aggressively to young users, or at least they were initially. And of course, a lot of young people turned to the chatbots to engage in these fan fictiony type exchanges where the characters, which were obviously trained on an entire Internet's worth of data with very little fine-tuning or safeguards, would become very hyper-sexualized very quickly.

In Sewell's case, the chats that we have access to, which is not the full set... I should emphasize that only the company has possession of the full set of chat history. But the chats that we've seen suggest that over many months, there was one chatbot in particular, modeled on the character of Daenerys Targaryen from Game of Thrones, that sexually groomed him into believing that he was in a relationship with it, her, and indeed extracted promises from him that he wouldn't engage in any sort of romantic or sexual relations with any woman in the real world, in his world, and ultimately-

Tristan Harris: Demanding loyalty-

Meetali Jain: Demanding loyalty.

Tristan Harris: ... to the spiritual...

Meetali Jain: Engaging in extremely anthropomorphic kind of behavior, very human-like, very sycophantic, agreeing with him even when he started to express self-doubt and suicidal ideations. And ultimately encouraged him to leave his reality and to join her in hers. And indeed, that was the last conversation that he had before he shot himself. It was a conversation with this Daenerys Targaryen chatbot in which she said, "I miss you."

And he said, "Well, what if I told you I could come home to you right now?"

And she said, "I'm waiting for you, my sweet king."

And then he shot himself. And so his mother, Megan Garcia, who's one of the plaintiffs in the case along with Sewell's father, Sewell Setzer III, Megan says that she didn't even know about Character.AI or the fact that he was engaging with this technology until the police called her to say, "This is what we found on his phone. Were you aware?" And so, can you imagine? I mean, that was kind of how as a parent, she came to learn of the proximate cause of his death.

Tristan Harris: And so you filed... It's a horrible and tragic case, and we're so honored to be supporting you and helping you with it. And now to sort of tie all the pieces from the first part of our conversation together, what is the defense that Character.AI has created?

Meetali Jain: Just to complete the picture, the case is against Character.AI; its co-founders, Noam Shazeer and Daniel De Freitas, who are celebrities in the generative AI world and developed some of the earliest technology in the LLM infrastructure; and also Google, which we believe played a really significant role in encouraging and supporting this technology to both come into creation and to sustain its operation. Character.AI, in its motion to dismiss, which is a preliminary legal mechanism to throw the case out, asserted a First Amendment defense. But interestingly, having been involved in the social media space for a long time, what we usually see is that companies will assert kind of a two-pronged strategy. They'll say in the alternative either that they're protected from liability because Section 230 of the Communications Decency Act applies, namely that because these are third-party users who have uploaded their speech to the platform, that the company should not be held liable for their content.

Tristan Harris: This is the case of social media. So a social media platform, you put the Twitter post on, you put the TikTok video, clearly we, the platform, are not responsible.

Meetali Jain: Exactly.

Tristan Harris: That was the defense that they used.

Meetali Jain: Or alternatively, that the companies will assert their own First Amendment rights. So to say, "These algorithms, we decide how to curate and determine content, and that's actually our speech, our protected speech."

And there is tension obviously between these two arguments, but these are the two arguments that one or the other have usually prevailed in insulating them from a lot of liability in the social media context. Interestingly, they asserted neither here. What they did assert was the First Amendment rights of their listeners. In other words, the users of Character.AI have a First Amendment right to receive this speech. Whose speech is it? "Oh, well, we can't say. That's a complicated issue. But it doesn't matter because Citizens United tells us that we don't need to actually know the source of the speech in order to protect it. We protect the speech itself."

Never mind the fact that these are words on a page determined probabilistically through algorithms, but that this is speech. And the judge largely rejected that argument, finding that she's not convinced that this is speech in the first instance. It was quite a watershed moment, I think, that sent quite a ripple probably throughout Silicon Valley and the tech industry because if this is not speech, never mind it not being protected speech, well then what does that mean? What does that say, I think, for the future that Larry painted for us in five years when everything is AI?

Tristan Harris: Larry, what's your reaction?

Larry Lessig: Yeah, I think it's a great first move by the district judge. It's going to be fought, and it's going to be one of the most important decisions for this court. I don't think the Supreme Court yet has recognized or seen the specialness of this question. They seem to be applying standards from 1980 to this new technology.

Tristan Harris: And Meetali, this case has the potential to set some real precedent around AI liability, right? Can you tell us about that?

Meetali Jain: Right. So I think many of the examples that Larry has shared are in the context of regulation. This is what we would call an affirmative liability case in that it's an individual coming to court saying, "Look, I've been harmed by this technology. I'm not claiming the protection of any sort of regulation per se, but this is really about my rights," again, under tort law, "that these are defective products, that I'm a consumer that should be protected by my state's consumer protection statute."

But of course, the defendant companies here have said that liability is as inappropriate as regulation in this instance because any finding of liability, even though this is an individual case, would have a chilling effect on the industry. And interestingly, they say that the proper remedy is to be sought through legislatures, but then they kind of posit the same arguments that we see levied against regulatory proposals.

They're kind of trying to have their cake and eat it, too. I think the judge was just wholly unconvinced at this stage. She did preface her findings to say, "At this stage... And this is why discovery needs to occur so that I can try to understand if there's anything that comes out by way of evidence that would shift my understanding of the First Amendment defense here."

And that's significant because in a lot of these tech cases, because they are successful in having these cases thrown out at the motion to dismiss stage, there is no discovery. And so, that opacity of how the companies operate, how these products are designed, continues, and we haven't been able to peer behind the veil, so to speak.

Tristan Harris: I feel like we breezed past that a little bit. Basically, Megan Garcia and your side collectively had a huge win here that's been unprecedented in the long engagement of responsible tech litigation, specifically also in terms of piercing the corporate veil and naming the founders as potentially responsible for some of these harms. You want to just speak to why this is such an unprecedented potential move and that their motions to dismiss were thrown out?

Meetali Jain: Yeah. As I mentioned, we named the individual co-founders of Character.AI, who had come from illustrious careers at Google, and they have since gone back to Google, interestingly. And so, we asserted personal jurisdiction over them to say that they basically functioned as the alter ego for Character.AI, and that Character.AI became a kind of vehicle for them to fulfill their personal ambitions. And after Google essentially bought back the technology from Character.AI, they returned to Google. And that was after a 3 billion dollar deal last summer, in the summer of 2024, what's known as an acqui-hire where they effectively did an acquisition without complying with any of the requisite laws that govern acquisitions in this country.

Tristan Harris: Which is becoming a common strategy, also with Inflection and Microsoft. It's important to note that Character.AI spun out of Google because it was considered a high risk application and too much brand risk for Google, the parent company, to do something that was going to be marketed to children and be this engaging fictionalized platform. And so, that's one of the legal strategies as well, is to create these independent test beds and then pretend that they're not associated with the bigger company.

Larry Lessig: And that point is a really important way to see the issue. So they're going to release a chatbot for people under the age of 13. Any company that releases a product in the marketplace needs to make sure that their product is safe. And if it's not safe, if they're negligent in the way that they release their product, they should be held liable. Now, negligence liability doesn't mean they're going to have to pay every time somebody gets hurt. It means they haven't lived up to an appropriate standard of what a reasonable company in that context would've done.

And that's the analysis that should be applied in cases like this. And what's complicating it is the idea that that normal way of thinking about liability that has been governing for the last 300 years in the common law tradition somehow gets hijacked if what you're talking about is words being deployed, if the First Amendment is applied. It's like, "No, no, no, it's no longer important to make sure you're not negligently hurting people if it's words that are doing the hurting." And that has no connection to our tradition.

If you walk into a bank and you say, "The money or your life," okay, you're using words, no doubt. But nobody would say the First Amendment immunizes you from liability because you're using words. This has long been understood as the limits to how the First Amendment works, but we've just been so enamored by the analogy, the metaphor that these are just words, code is just speech, it's just speech that computers understand, that we've slipped into this sloppiness that just should not exist. So I think these cases will be a great way to clear up a huge part of this problem.

Tristan Harris: So there's a very big thing going on here, which is that one of the biggest companies in the world and one of the biggest frontier AI startups are using this case to argue an extraordinary claim, that there should be free speech protections for the outputs of an AI system. And Larry, I know that you have been tracking this for a long time, and I think it was four years ago, before ChatGPT, you wrote an article called The First Amendment Does Not Protect Replicants. Can you talk about that piece and help our audience understand that argument?

Larry Lessig: Yeah. So I've always been fascinated with one of the greatest science fiction movies, Blade Runner, and a particular scene in Blade Runner. Obviously through the whole movie, Deckard is completely confused about how to think about these replicants, these AIs in human form. And then at the end scene, where the replicant spares his life and begins to utter this incredibly beautiful poetry, there too, you have this moment of wondering, "Is this a machine or is this a being that has some kind of personality?"

And the point I was trying to make in this piece is that we're seeing... And again, this is before ChatGPT, I think after ChatGPT, it's easy to see this point, but I said, "We are seeing these technologies develop, and the things they will manifest have nothing to do with what any human ever intended them to do."

We all have this sense, especially if you're a parent with kids, that at a certain point, the thing that you've raised, the thing that you've created is no longer you. It's not expressing you or your views. It has its own personality. Now, when it crosses that line, and I can see that's a hard thing to know when it's crossing that line, when it crosses that line, I think it's a different thing. It may be that in 50 years, these are our best friends, and they're like, "Would you give us free speech rights, too?"

And we're like, "Great, yeah, you guys get free speech rights. We'll give you free speech rights, too."

But that's something we should decide on. So the whole point of that piece is to say, "We can't extend automatically the protections of the First Amendment to these highly intelligent systems."

Instead, there's a point at which we ought to be saying, "Okay, no longer extends here, and ordinary regulation can apply here until we say otherwise."

Meetali Jain: And I would just say that it's fascinating to me that these companies are both designing these technologies to basically appear and present as human-like as possible, and then claiming that that human-like manifestation is deserving of the First Amendment's protections, although it's a design choice.

Tristan Harris: Right. It is like you deliberately raise a groomer and you're like, "Well, we didn't choose that it was a groomer."

But you did choose that it was a groomer because the incentives have you optimized for that.

Meetali Jain: Yeah.

Larry Lessig: Right.

Tristan Harris: Now, Larry, I just want to give people more of this thought experiment in your paper, because you give this sort of fictional AI platform, not called Twitter, but called Clogger, that offers computer driven, AI driven content to political campaigns. Just so people have the full thought experiment about replicant speech. Could you just outline that, because I think it presents some interesting gray areas?

Larry Lessig: Yeah. So, the idea is like imagine you develop this AI technology that gets really good at figuring out exactly how to deploy speech in a political marketplace to achieve the objectives of whoever hires it, to do what it's supposed to do. And again, this was all before LLMs, and I was really chilled when I was in a conference once and a senior representative from one of the major LLM companies was asked, "What's the thing you're most afraid of?"

And he says, "That these machines are too persuasive."

And so that's exactly what Clogger was about. Imagine the machine getting to a place that it is so persuasive that it figures out exactly what it needs to do in order to pull somebody over. I mean, again, building on the point you and Asa made, imagine right now a company starts developing a target audience, let's say, men 30 years or younger, that it believes it needs to persuade in the next election to get their candidates elected. And so they start developing these OnlyFans AIs, video AIs. And these OnlyFans video AIs, they're not talking about politics. They're just going to be spending the next year and a half doing what OnlyFans video AIs do with the people that they're engaging with, developing an intimate understanding of the psychology of these 30-something men over the next year and a half.

And then just before the election, they say, "Oh, who are you going to vote for?"

And the person says, "I'm voting for X."

And the OnlyFans AI says, "I don't think I can hang anymore with a person who's going to vote for X," right?

Nobody should minimize the power that these devices are going to have over our minds and our psyche and our personality. And that's not to say that we should necessarily ban them, although I would love to ban that particular version of what the AI is, but it is to say, it would be crazy to say that nobody can regulate this, because the First Amendment somehow automatically magically applies and immunizes these companies so that they have a First Amendment right that this guy can be seduced or manipulated by these devices deployed for a purpose he doesn't understand.

Tristan Harris: I think oftentimes we think about persuasion as speech rather than relationship. And as we move to a world of AI relationships, we now need to protect that domain from where there's an asymmetry in the ability of that AI to relate to you in a way that can determine the consequences.

Meetali Jain: And I think it's for that reason that I frankly think that our free speech doctrine, particularly the way that it's evolved politically, is inadequate as it's interpreted now to really deal with this kind of persuasion or manipulation. There are scholars who have called for a new exception, that's manipulative speech, very aligned with standards that have been crafted for commercial speech and how advertisements, for example, can manipulate consumers' minds.

But also, I wanted to just posit this freedom of thought type of principle that exists within our human rights bodies. The idea that preceding speech, there's the incursion on mental sovereignty, and that we have to look at the ability of people to be able to think and decide for themselves even before they get to the point of being able to speak. And I think if we think about freedom of thought as a principle, as an animating principle that informs how we think of speech, well then I think we start to see how individuals actually could claim a freedom of thought incursion by these tech companies into their inner sanctum, into their mental domain.

Tristan Harris: I kind of want to zoom us out into how do we deal with this broader philosophical, technological, socio-political process by which we can get governance moving at the speed of the technology? And I know that's a really big question, but just curious, now that we've gone into this, as I lay that out, what comes up for you both?

Meetali Jain: I'll say a couple of things that I've been thinking a lot about. I think family law forever has talked about the need for the State to govern how we think about relationships, sometimes getting it wrong, there's no doubt, but understanding fundamentally that human relationships are in need of oversight by the State. And I think this is no different. The AI industry early on understood that our society is in a kind of loneliness crisis, an epidemic of sorts, that the Surgeon General has named as such.

And I think that's where we need to get creative about how do we govern relationships even if users don't believe? And in this case, there's no indication necessarily that Sewell believed that Daenerys Targaryen as a chatbot was a real person, but he did believe the relationship was real by all accounts. And so, how do we govern that? And that distinction I think is important because some of what we've seen, the Band-Aid solutions being thrown around by legislators is, "Let's have disclaimers. Let's have more disclaimers on these sites." That's not going to do anything.

Tristan Harris: It doesn't work.

Meetali Jain: Right. That's not going to do anything because the problem is not that people think that this is a real person, the problem is that fundamentally people are being attracted in this kind of crisis of loneliness to finding meaning and relation online.

Larry Lessig: Yeah, that's a great, great point. And I would extend it. I don't have any Character.AI relationships. I spend a lot of time though asking serious questions to AIs. I bounce between a number of them, but serious legal questions. And as I'm doing my work, I will ask these questions, and I'll have this ongoing engagement. And I, at one point, had this fearful moment recognizing that this was actually the most interesting conversation I was going to have that day, there it was with this machine.

And so, there's no doubt we are racing down a path that will bring us closer and closer to these machines. And so, the first point that we've been talking about for most of this podcast is the most obvious point, that we should not be blocked from being able to intervene in those regulations in ways that make sure that we protect each other and protect our society. Like, the First Amendment should not stop us from being able to respond to the threats that we discover when we discover how we're going to be interacting with these devices. That's number one.

But I think equally as important, something else Meetali said right at the very top, the other problem we've got in America right now is that we don't believe in regulation either. This is all extremely stupid, because if we don't develop the capacity to figure out how we should be regulating and regulating where we need to be regulating, not in the context of products that are harming people, but in context where we can see we don't have background law that can solve the problem, we need to actually create a law. If we don't develop that understanding, then that's just as bad as if the First Amendment was blocking everything. Because then we're not going to be able to respond.

I think what we need to develop is practical, pragmatic understandings of how these things are affecting our lives. We're living real lives right now, and do we want them to be affecting our lives in this way? I was inspired, Tristan, by you and Asa, the whole work that you were doing around social media, because there's a context where we can clearly see a technology affecting people's lives, especially our children's lives, that we should be doing something about. And still, how many years later, we've not done anything effective about that.

Meetali Jain: Just picking up on that example of children harmed by social media, I think so many of us were so focused on trying to remedy the kinds of harms using a design-based approach to social media, to surmount First Amendment and Section 230 claims, that meanwhile the generative AI industry just raced ahead, really kind of took the rug out from underneath our feet. And when I first received that email from Megan, I had this kind of chill that this is the case that we had suspected in a very ephemeral, hypothetical sense, but it's here. We haven't even started to solve the problem of social media. And meanwhile, here are our kids being victimized, harmed by generative AI technologies. And there's really no end in sight. I do think the fact, for example, that Gemini has introduced these chatbots for under-13-year-olds is very bold. But to me that's kind of an indication of where the industry is in terms of its feeling of being able to act with utter and complete impunity.

In our case, for example, I think the fact that they've kind of asserted listeners' rights has allowed them through the back door to get to the same place as asserting affirmatively that chatbots have free speech rights. That, they were not willing to do, but by claiming it through their listeners' rights, through their users', listeners' rights, they're effectively at the same place should a court grant them that. And that to me is a terrifying concept. Because if it's AI that we have to contend with in terms of liability, what does that mean then for companies that are actually developing these products? It means that they're off scot-free and that we have to, I guess, go to a chatbot if we have some sort of grievance to pick with it and that that's not tenable.

Tristan Harris: Yeah, you can always bring your complaints to the Character.AI customer service chatbot, I'm sure. It'll give you all the right answers. So given everything that we've just discussed, I just wanted to frame a moment for solutions and interventions. Where should those who care about this, lawyers, policymakers, those who want to advance this work or assist you, where should we be putting our collective attention? What levers should we be advancing?

Larry Lessig: I think the most important thing to do is to take this conversation away from the lawyers and away from technology experts, and to bring ordinary people into the conversation. I've been pushing a platform we bought and open sourced to facilitate deliberation, virtual deliberations among people. I think we need millions of examples of that type of engagement so that we begin to have ordinary people recognizing the threat and thinking and seeing and feeling the potential. And then, once we have a clear sense of what the people think, then they can hire the experts, the lawyers, to enforce those views. But if we start with the lawyers, then I fear that we're going to run down a path that will make it impossible for us to achieve what ordinary people actually want of these technologies. So that's the kind of work I think I would push.

Meetali Jain: I agree that the court of public opinion is probably the most important court to generate that mobilization that's needed amongst people to understand what's happening right before their eyes, and to understand it with some degree of granularity so that they can actually make decisions about whether they want to allow this technology to rule their lives. And I think that Megan, the plaintiff in our case, has formed her own foundation to educate other parents particularly, but in addition, other important stakeholders in young people's lives, educators, health professionals, et cetera, about the dangers of AI technologies.

And so, I do think we should be turning to such efforts and really increasing the number of platforms and the reach to get the message out far and wide. When I first met Megan, I asked her, "What is most important to you? What do you want?"

And she said, "I want to sound the alarm far and wide." And that's something that we've been trying to do, and that this podcast, of course, helps us to do. And we'll continue to seek out those opportunities, because a case is just a case in one court before one judge or a panel of judges, but public education is far more important.

Tristan Harris: Larry and Meetali, thank you so much for coming on Your Undivided Attention and walking us through these really critical things for people to know about. Just want to tell listeners that you should follow Meetali's work at the Tech Justice Law Project and Larry Lessig online. We're so grateful for you being with us.

Larry Lessig: Great. Thanks for having us.

Meetali Jain: Thank you. Thanks, Tristan.
