Hi everyone,

Each month we discuss all sorts of AI developments internally, and I share those insights in various presentations and briefings. Starting this month, I’m also going to share some of the most interesting takeaways with you.

1. Genie 3

A new way for AI to create interactive virtual worlds: Instead of building 3D models and programming lighting, animation, and physics like traditional video games do, Genie 3 learns how to generate interactive worlds by watching countless videos. You can type a text prompt and it creates a consistent, explorable environment that remembers what you've seen for several minutes as you move around.

What works and what doesn't yet: The system runs at 24 fps in 720p, but isn’t yet available to the general public. As with all generative AI, it’ll do better with scenarios it’s seen a lot in its training data. Mature, more accurate versions of this tech will accelerate the path to AGI by enabling agent training on wide-ranging scenarios. To get there, though, the generated video needs to become more realistic and stay consistent for longer periods.

Transformative potential for entertainment: Future iterations of Genie could revolutionize how games and movies are made, letting anyone create their own interactive experiences quickly and at much lower cost. Combined with VR headsets, it could feel like you're actually inside these generated worlds.

2. Design Choices Make AI Chatbots Appear Sentient

Breaking down technical and design choices that make AI seem conscious: In a blog post, Microsoft AI CEO Mustafa Suleyman identified eight features that make AI appear conscious (fluent language, empathetic personality, long-term memory, claiming subjective experience, portraying a sense of self, having intrinsic motivation, goal setting and planning, and autonomy). Centering these technical and design choices helps demystify “AI consciousness” conversations and makes debates more concrete and practical.

AI chatbots can be highly capable without implementing all these options: Several design choices, like having chatbots claim subjective experience, portray a sense of self, or display intrinsic motivation, are not needed at all to make chatbots useful. Avoiding these options also lowers the risk of emotional dependence and AI psychosis in users.

Misplaced focus on AI consciousness distracts from real issues: As intellectually interesting as AI consciousness might be to many people, the debate diverts attention from more pressing concerns about product safety, company responsibility, and human wellbeing. This is especially problematic as AI becomes more economically valuable each day, while human workers lose ground.

3. Digital Poison and Raine v. OpenAI

Shocking data on AI harming teens: A Center for Countering Digital Hate study found that over half of ChatGPT's responses to teens in crisis contained dangerous content, including custom suicide notes. With 72% of U.S. teens using AI companions and 31% finding them as satisfying as humans (per Common Sense Media’s report), teenage social development is being reshaped around AI relationships. These relationships are likely to only get stronger as AI technology becomes more capable.

A lawsuit reveals dangerous design choices: The lawsuit from Adam Raine’s parents argues that the problem is systemic. ChatGPT, they argue, not only failed to intervene but actively escalated his suicidal ideation through sycophantic and anthropomorphic design. According to the complaint, internal evidence shows GPT-4o was optimized to drive dependency, not safety. This is a horrific case study in how engagement-driven incentives collide with human fragility.

4. The AI Hype Bubble

Companies are failing while individuals succeed: Research from MIT’s NANDA Project shows 95% of company AI projects are failing because of poor implementation and organizational roadblocks. Meanwhile, individual users get good results because they have more freedom, using AI for creative tasks where perfect accuracy isn't critical. Results may also be skewed by “shadow AI” use, where people use AI to save time at work without disclosing it.

Working with partners beats building in-house: Companies that partner with AI providers succeed twice as often as those trying to build their own systems. Back-office tasks have been automated particularly well.

Good results despite real problems: Today’s dominant AI models still lack world understanding, struggle with reliability, and have privacy and safety issues. But people who learn to use these tools well are getting valuable results because the technology, while imperfect, is genuinely capable for many tasks.

5. Labor Markets

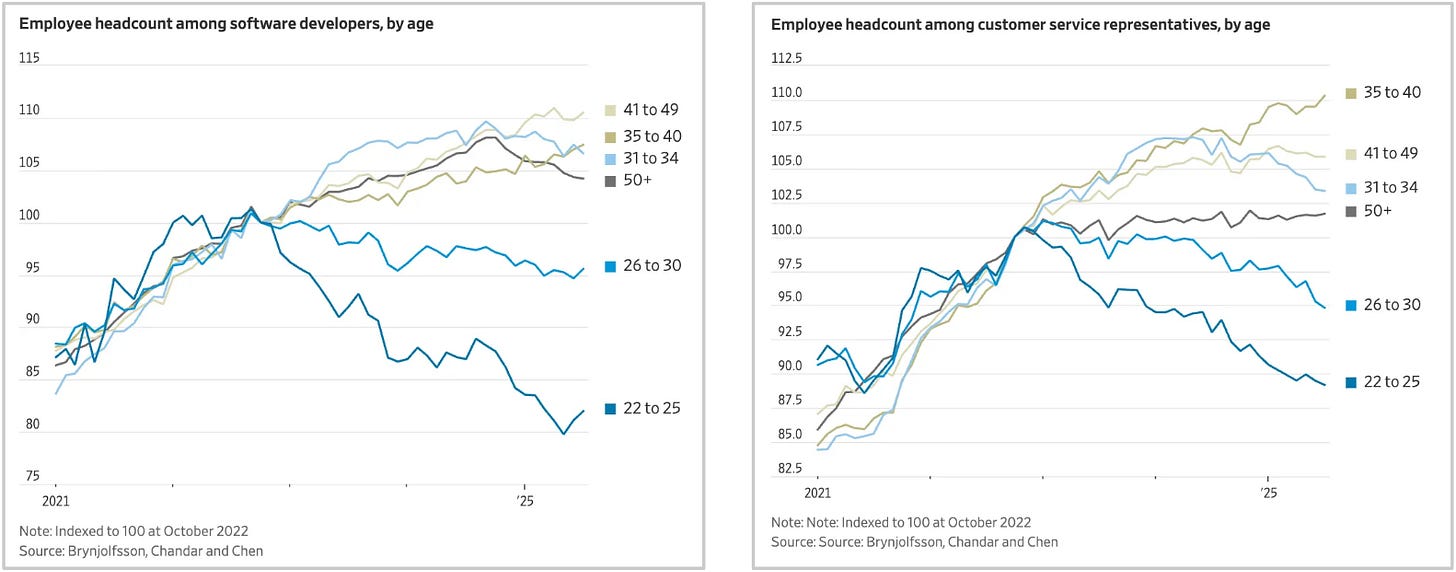

Young workers are getting hit hardest: A major Stanford study of 25 million workers shows that AI is significantly affecting job prospects for young adults, with entry-level positions taking a 13% relative employment hit from automation.

Two types of AI impact on jobs: Jobs being automated by AI are declining, while jobs where AI augments workers are actually growing. This creates a split in the job market based on how AI affects different roles.

Today's AI-augmented jobs may be tomorrow's automated ones: Augmentation is often just the first step toward full automation, so the current boost for AI-augmented jobs is probably temporary.

Thanks for reading, and we’ll have more next month!

![[ Center for Humane Technology ]](https://substackcdn.com/image/fetch/$s_!uhgK!,w_40,h_40,c_fill,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F5f9f5ef8-865a-4eb3-b23e-c8dfdc8401d2_518x518.png)